Generative AI Garden

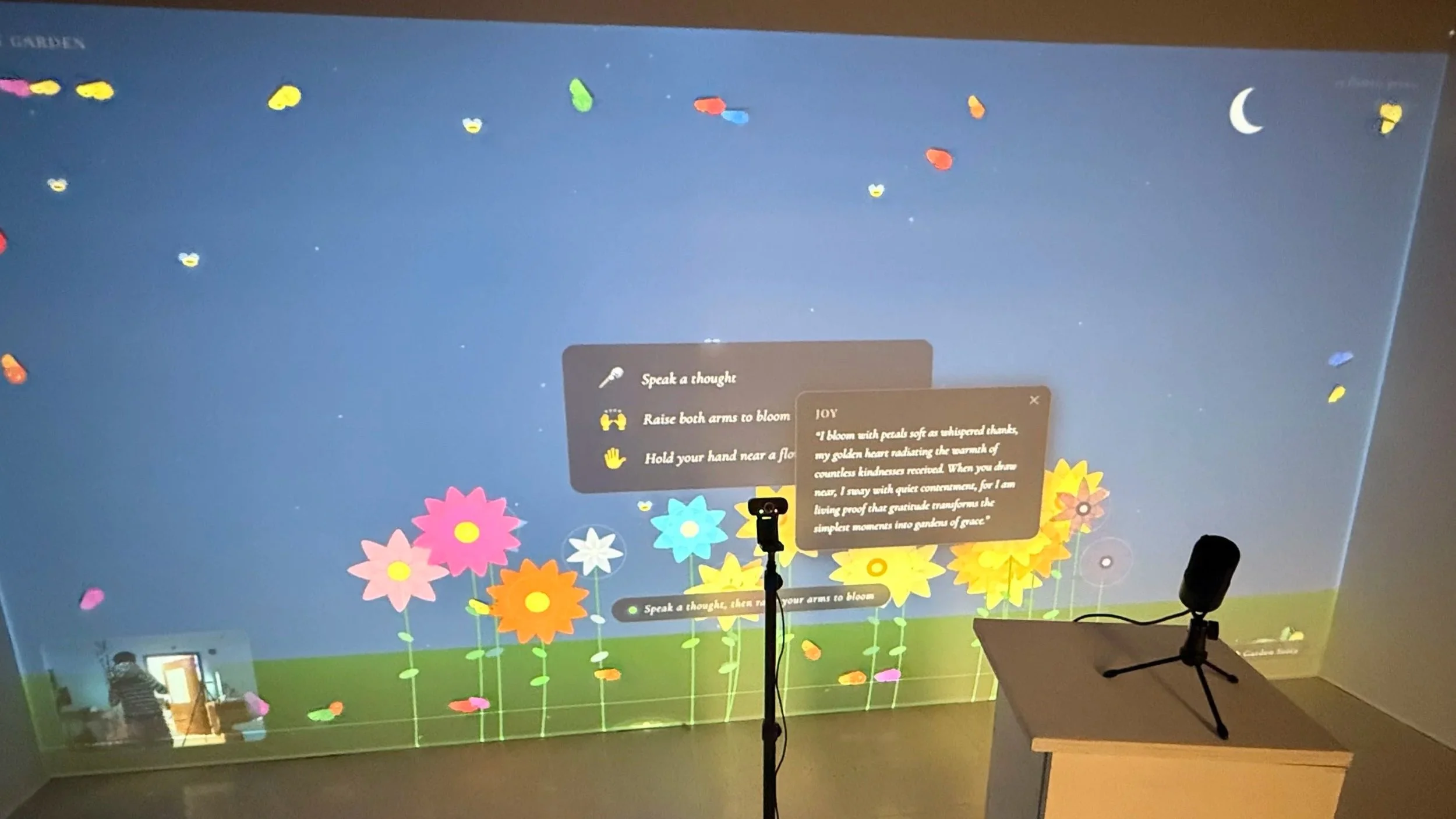

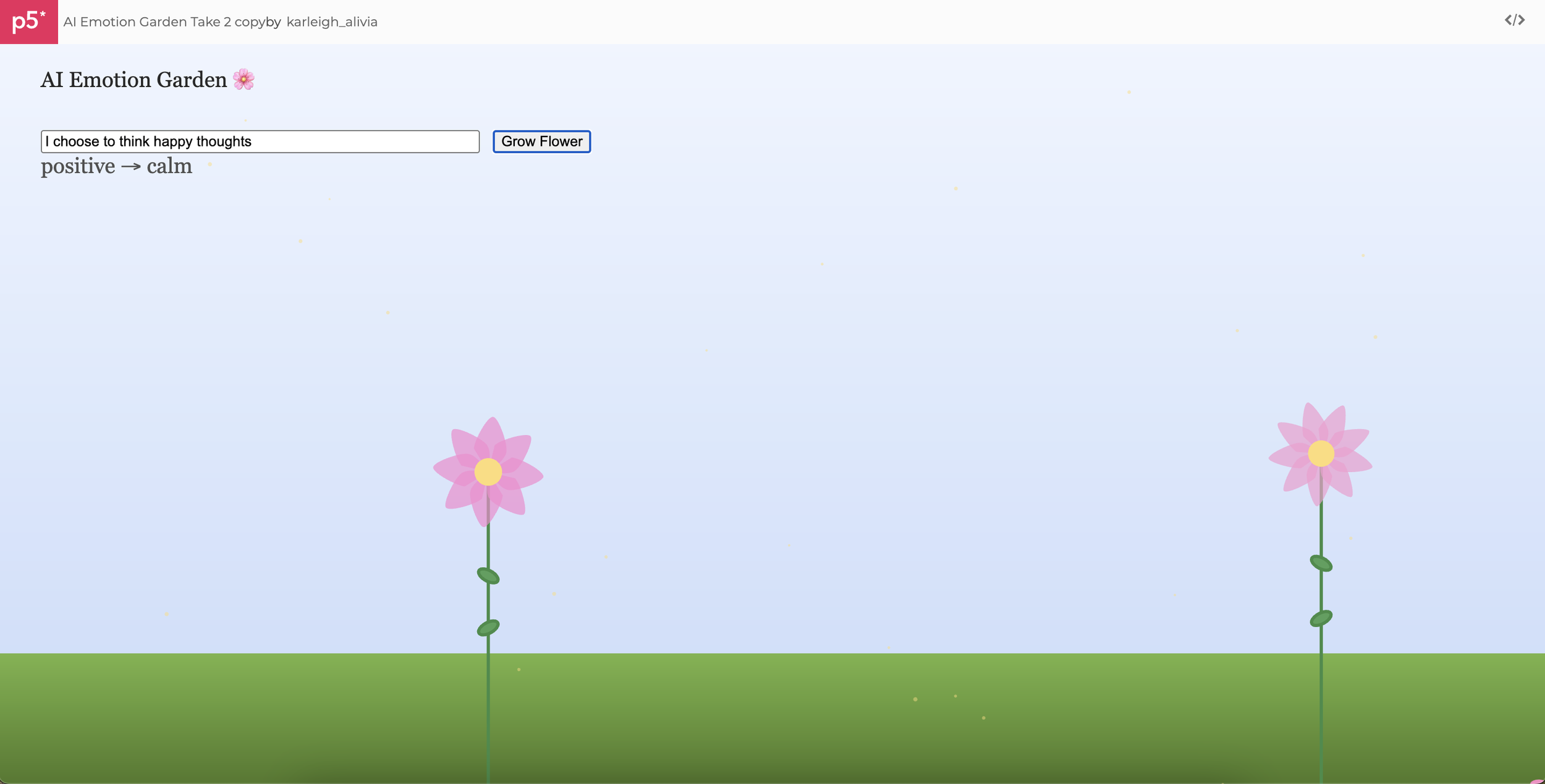

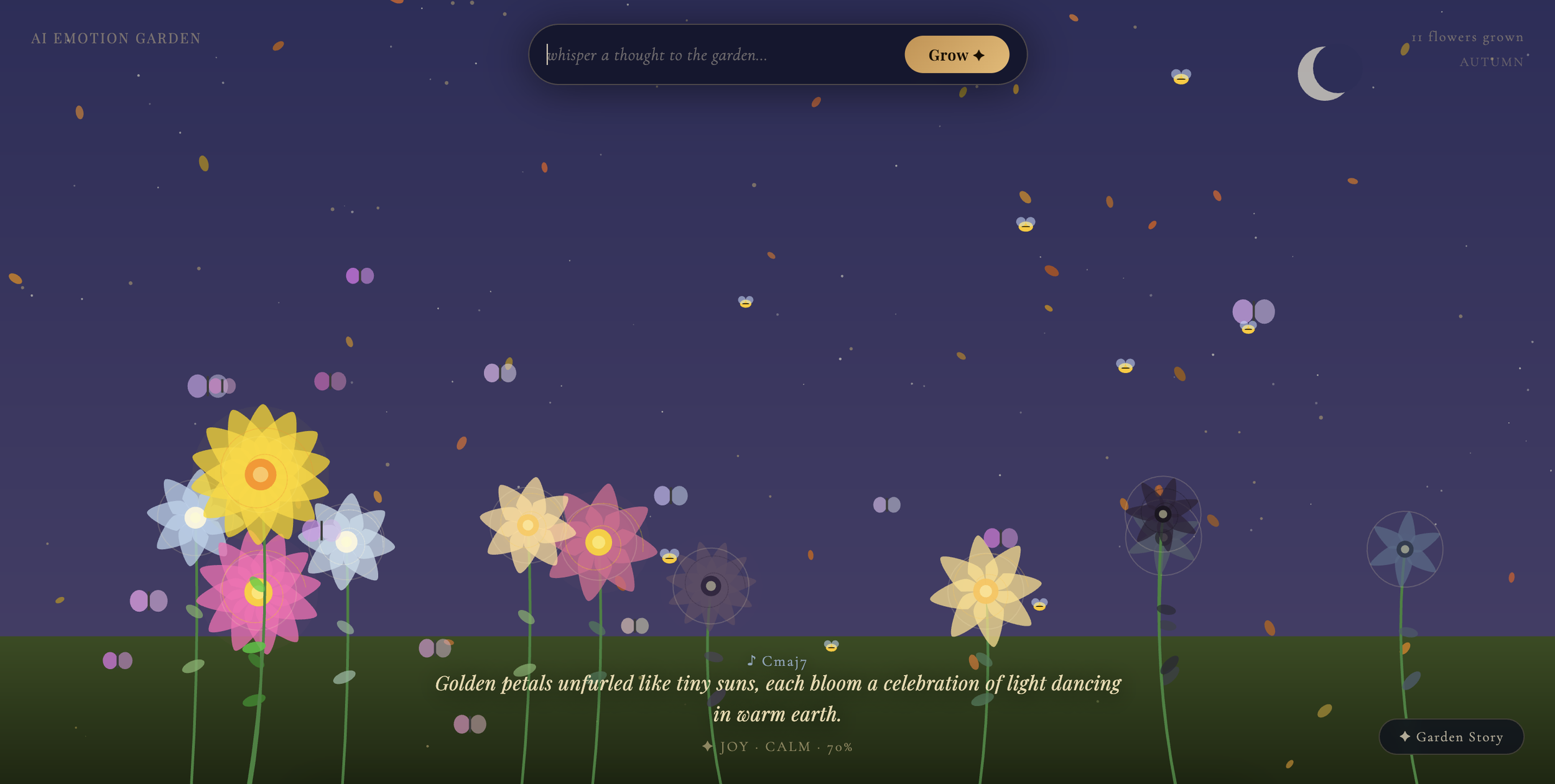

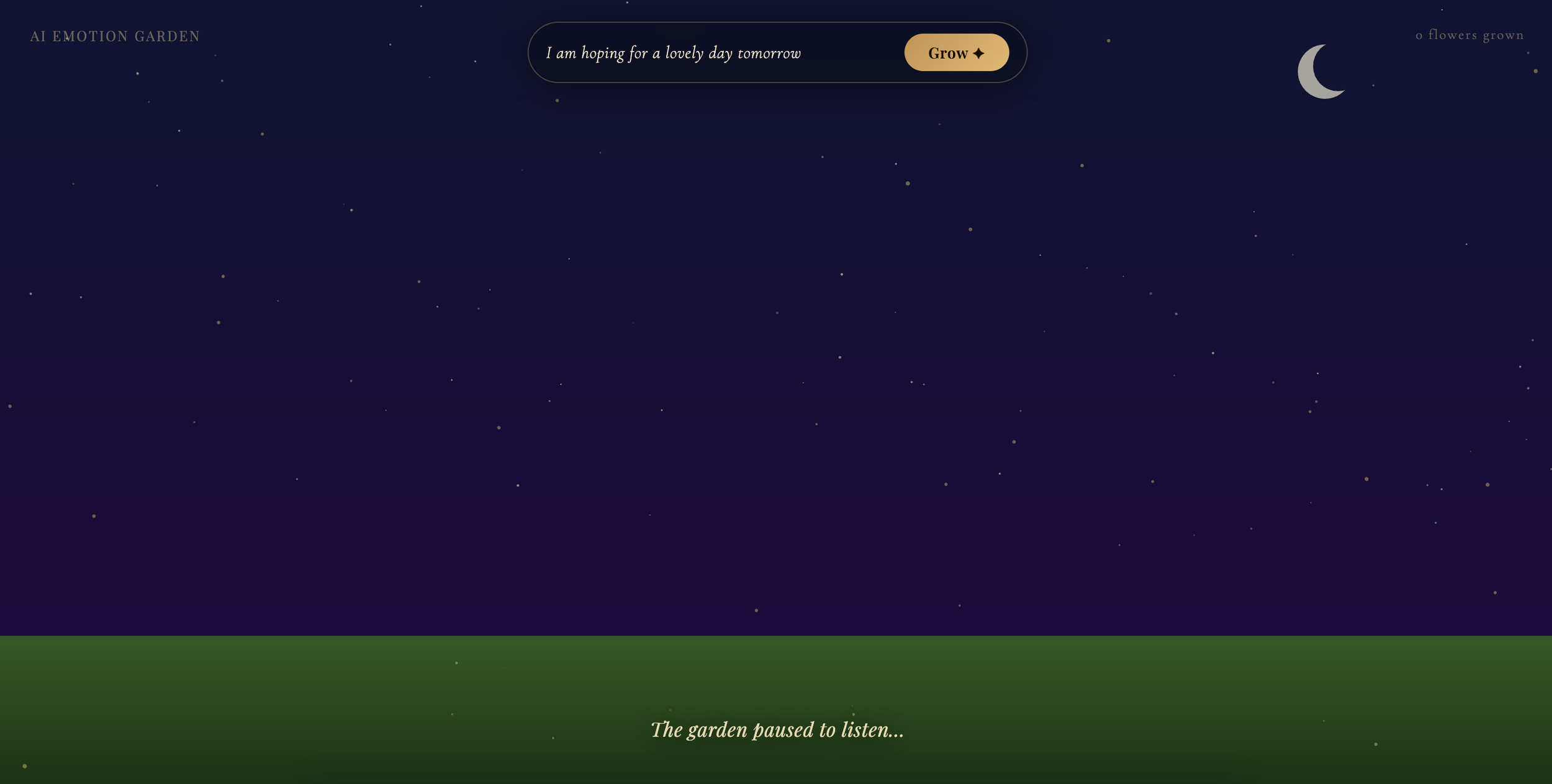

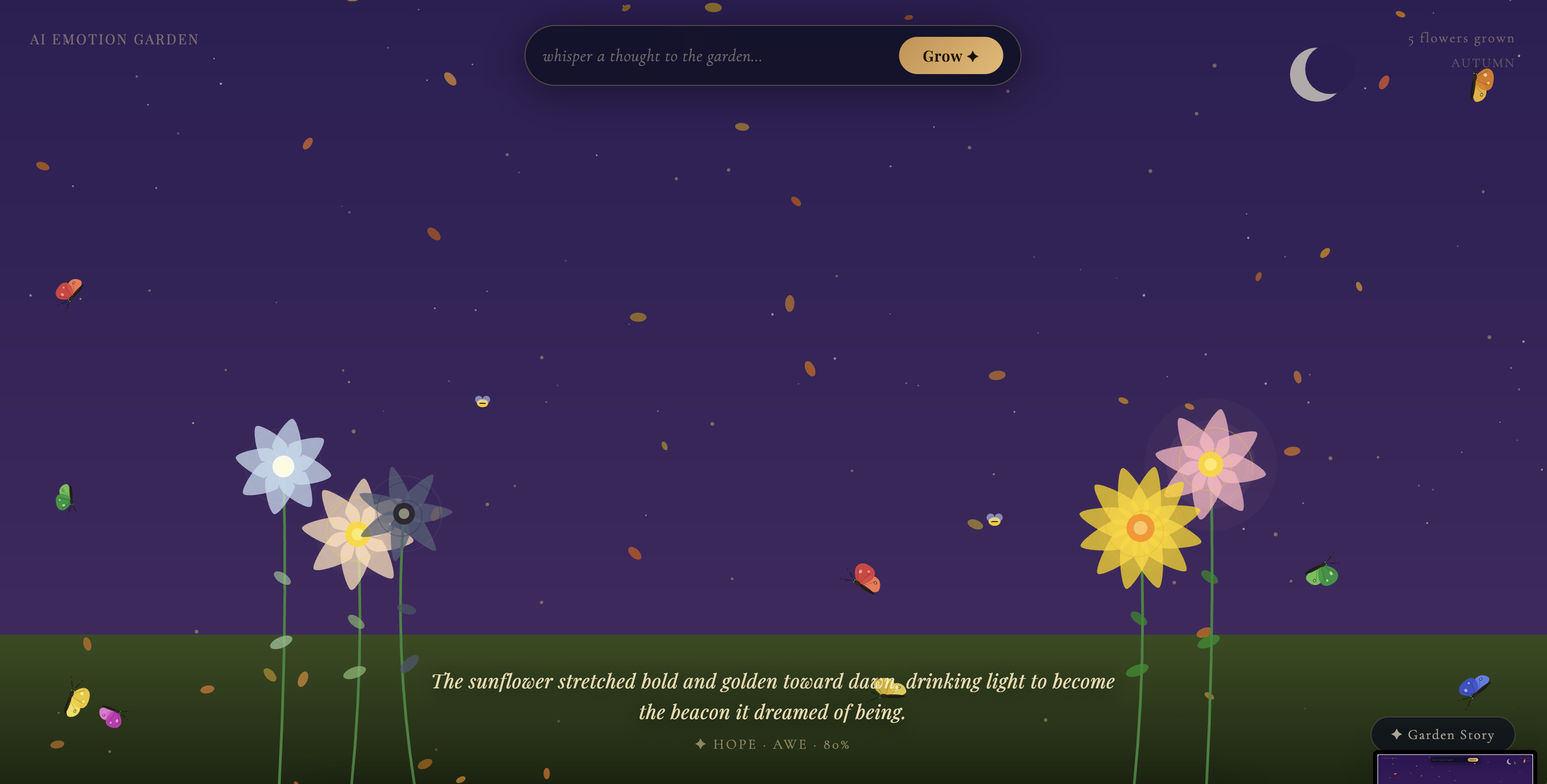

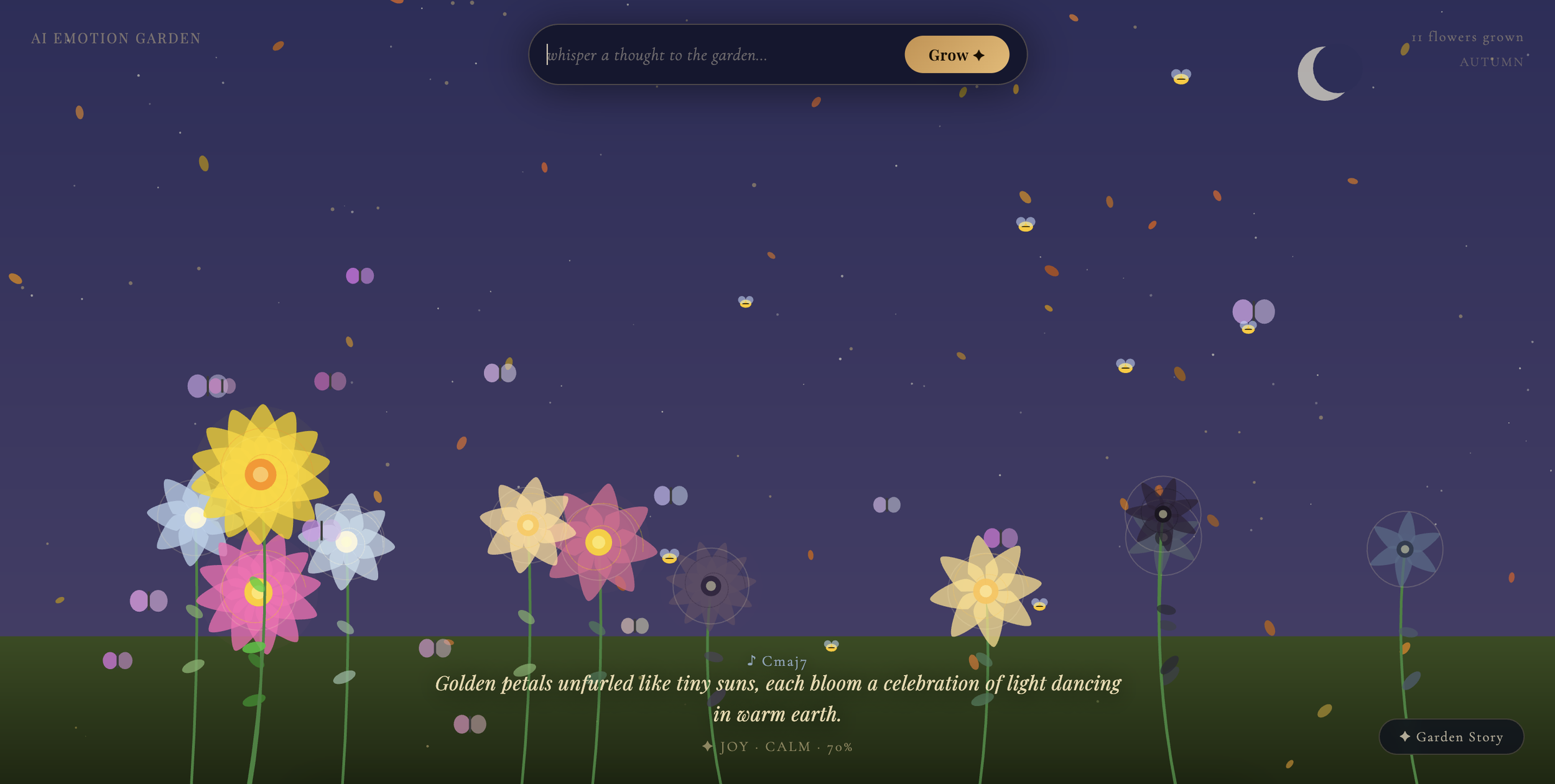

AI Emotion Garden is an interactive generative art installation that grows a living digital garden from human emotion. Visitors speak a thought, feeling, or single word aloud, and the garden responds in real time. A unique flower blooms, the sky shifts, insects appear, and the soundscape evolves, all shaped by artificial intelligence. Every element of the garden is a direct expression of the emotional content the AI detects in the visitor's words. No two flowers are alike, and no two garden states are the same.

The piece explores the intersection of language, emotion, and generative form. It asks what it might look like if a garden could truly listen: not just react to input, but interpret it, feel it, and grow from it. Each bloom is not simply triggered by a word but designed by an intelligence that reads the mood, intensity, and nuance behind that word. The garden accumulates over time, becoming a living record of every thought whispered into it, an emotional landscape authored collectively by everyone who participates.


Visual Studio Code, Claude AI, Anthropic API


Interactive Design, Generative AI Art, Sentiment Analysis


Josh Miller

Project Goals


1. Make AI feel intimate rather than transactional

Most interactions with artificial intelligence feel like using a tool: input goes in, output comes out. This project set out to design an experience where the AI feels more like a collaborator or witness than a system. The goal was for visitors to leave feeling that something genuinely listened to them, interpreted them, and responded with care, not just processed their words and returned a result.


2. Bridge the physical and the generative

The second goal was to remove all traditional interfaces from the experience. No screens to tap, no keyboards to type on, no buttons to press. By using voice, body presence, and gesture as the only inputs, the installation invites visitors to interact with a digital world the same way they move through a physical one, naturally and intuitively. The projection onto a wall was central to this: the garden exists in the room, not inside a device.


3. Create a living archive of emotion that people can experience

The third goal was for the garden to accumulate meaning over the course of an exhibition. Each flower is a permanent record of a specific person's thought at a specific moment. As more visitors participate, the garden grows denser, more varied, and more complex. The piece is never finished. It is always mid-bloom, always becoming, shaped entirely by the emotional lives of the people who encounter it.

What’s Generative AI & What’s Coded?

The AI Emotion Garden lives at the intersection of three layers: intelligence that interprets, code that renders, and bodies that activate. None of the three works without the others.

Generative AI (Claude): the intelligence that interprets

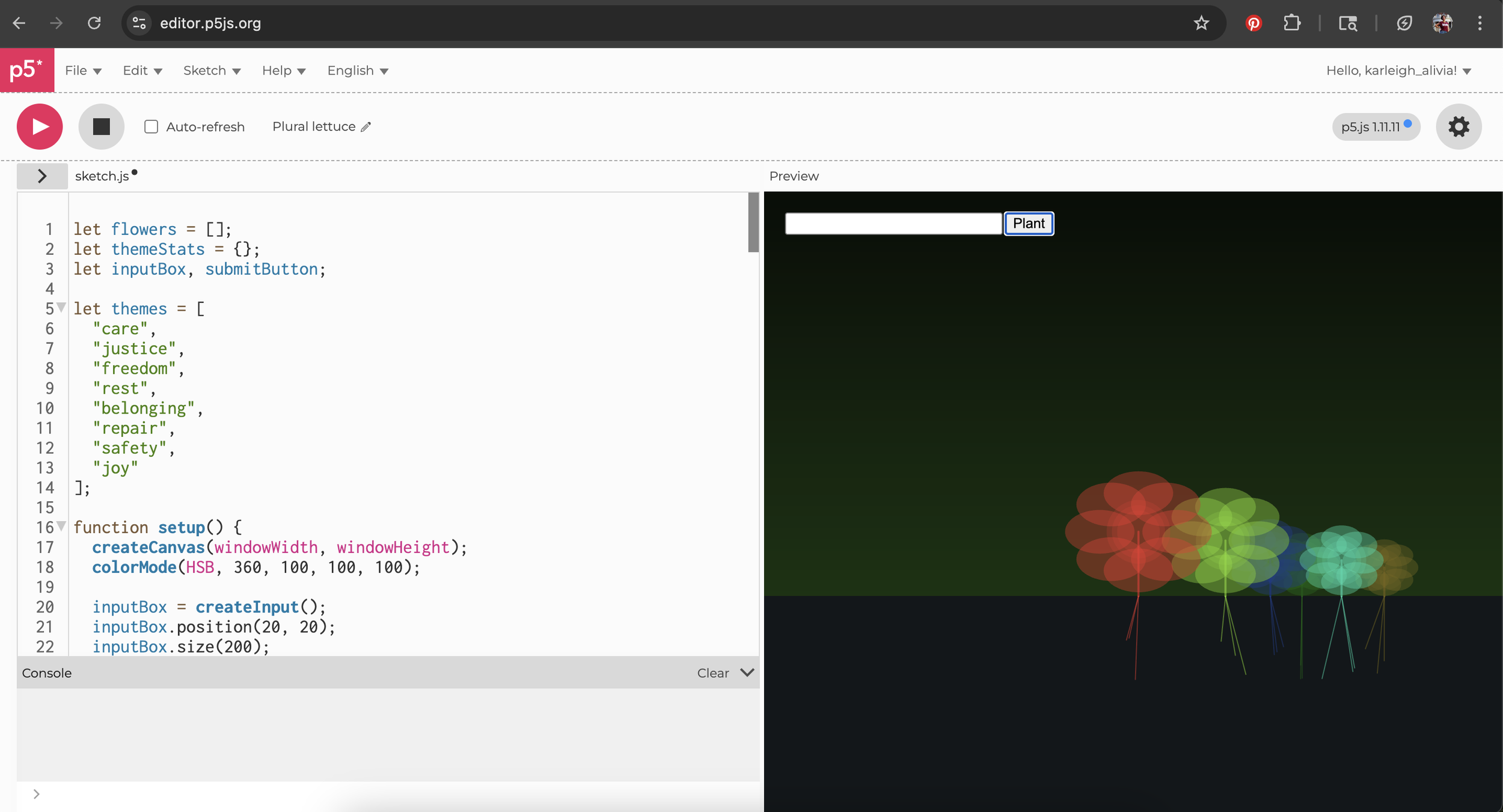

Emotion analysis (primary emotion, intensity, mood keywords, sentiment)

Flower DNA design (petal count, shape, color, glow, stem curve, spiral detail)

Poetic bloom caption written fresh for each flower

Sky and atmosphere design (color, wind strength, rain)

Musical chord composition played on each bloom

Flower voice (poetic first-person response when a flower is approached)
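Everything in the list above reaches the renderer as text from Claude, so the code needs to validate it before drawing. The following is a minimal TypeScript sketch of that hand-off. It assumes the prompt asks Claude to reply with a single JSON object. The schema is hypothetical and is not the project's actual format: the field names (`primaryEmotion`, `petalCount`, `stemCurve`, and so on), the numeric ranges, and the three petal shapes are all illustrative. Out-of-range values are clamped and missing ones fall back to defaults, so a malformed reply cannot produce an unrenderable flower.

```typescript
// HYPOTHETICAL schema for one bloom; field names and ranges are illustrative only.
interface EmotionAnalysis {
  primaryEmotion: string;   // e.g. "joy", "longing"
  intensity: number;        // 0..1
  moodKeywords: string[];
  sentiment: number;        // -1 (negative) .. 1 (positive)
}

interface FlowerDNA {
  petalCount: number;       // integer, 3..16
  petalShape: "round" | "pointed" | "heart";
  hue: number;              // 0..360 degrees
  glow: number;             // 0..1
  stemCurve: number;        // -1..1, sign picks the lean direction
  spiral: boolean;
}

interface Bloom {
  emotion: EmotionAnalysis;
  dna: FlowerDNA;
  caption: string;
}

const clamp = (v: number, lo: number, hi: number): number =>
  Math.min(hi, Math.max(lo, v));

// Use v if it is a finite number, otherwise the fallback.
function num(v: unknown, fallback: number): number {
  return typeof v === "number" && Number.isFinite(v) ? v : fallback;
}

// Parse the model's text reply into a Bloom, clamping every numeric field.
function parseBloom(text: string): Bloom {
  // Replies sometimes wrap the JSON in prose; keep only the outermost {...} span.
  const start = text.indexOf("{");
  const end = text.lastIndexOf("}");
  if (start < 0 || end <= start) throw new Error("no JSON object in reply");
  const raw: any = JSON.parse(text.slice(start, end + 1));

  const e = raw.emotion ?? {};
  const d = raw.dna ?? {};
  const shapes: FlowerDNA["petalShape"][] = ["round", "pointed", "heart"];

  return {
    emotion: {
      primaryEmotion: typeof e.primaryEmotion === "string" ? e.primaryEmotion : "neutral",
      intensity: clamp(num(e.intensity, 0.5), 0, 1),
      moodKeywords: Array.isArray(e.moodKeywords)
        ? e.moodKeywords.filter((k: unknown) => typeof k === "string")
        : [],
      sentiment: clamp(num(e.sentiment, 0), -1, 1),
    },
    dna: {
      petalCount: Math.round(clamp(num(d.petalCount, 5), 3, 16)),
      petalShape: shapes.includes(d.petalShape) ? d.petalShape : "round",
      hue: ((num(d.hue, 0) % 360) + 360) % 360, // wrap negative hues into 0..360
      glow: clamp(num(d.glow, 0.3), 0, 1),
      stemCurve: clamp(num(d.stemCurve, 0), -1, 1),
      spiral: d.spiral === true,
    },
    caption: typeof raw.caption === "string" ? raw.caption : "",
  };
}
```

For example, a reply with `petalCount` 7.6 and `hue` -30 parses to 8 petals at hue 330, and an empty object `{}` parses to the default flower.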

Coded: the system that renders

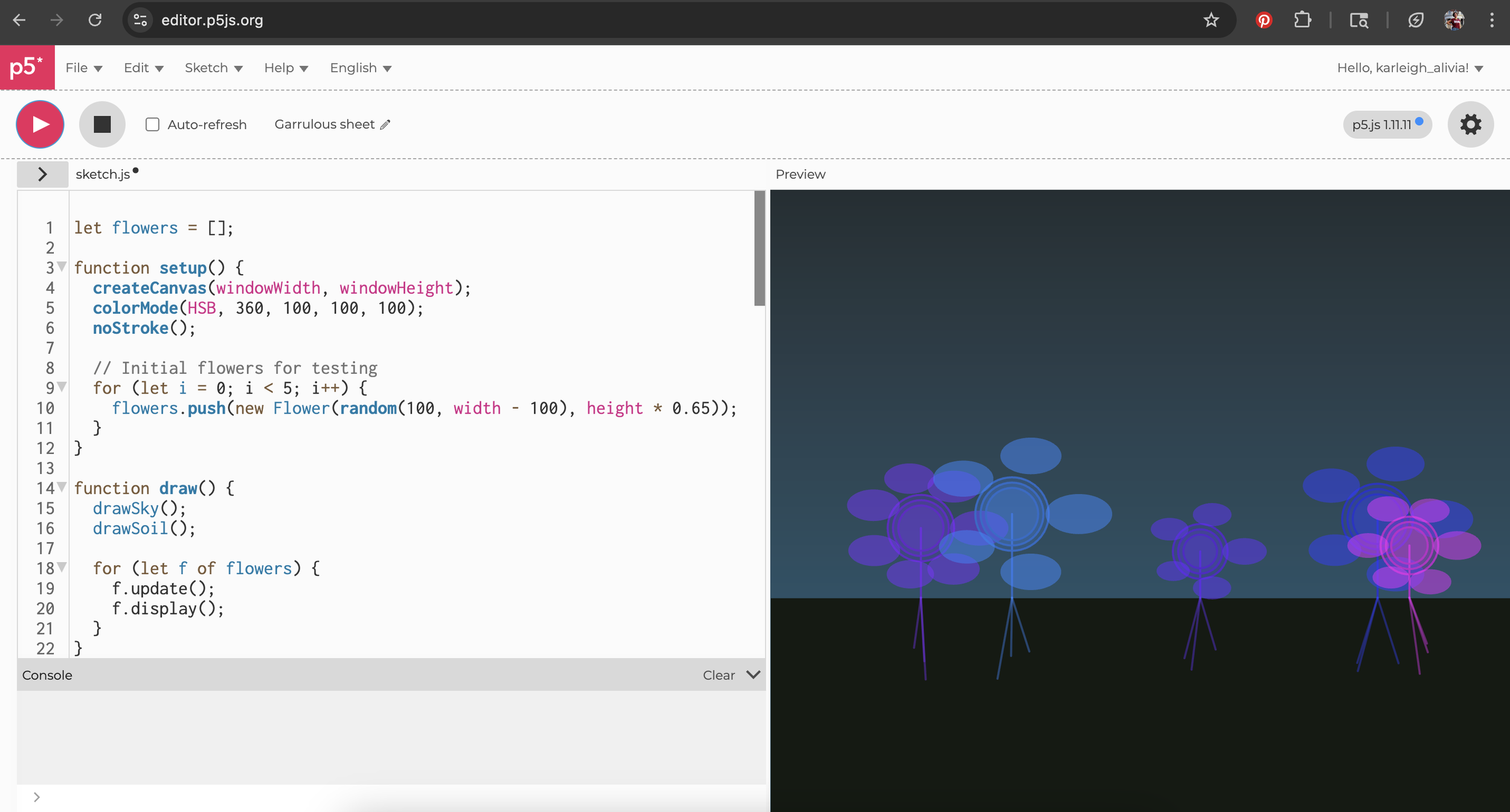

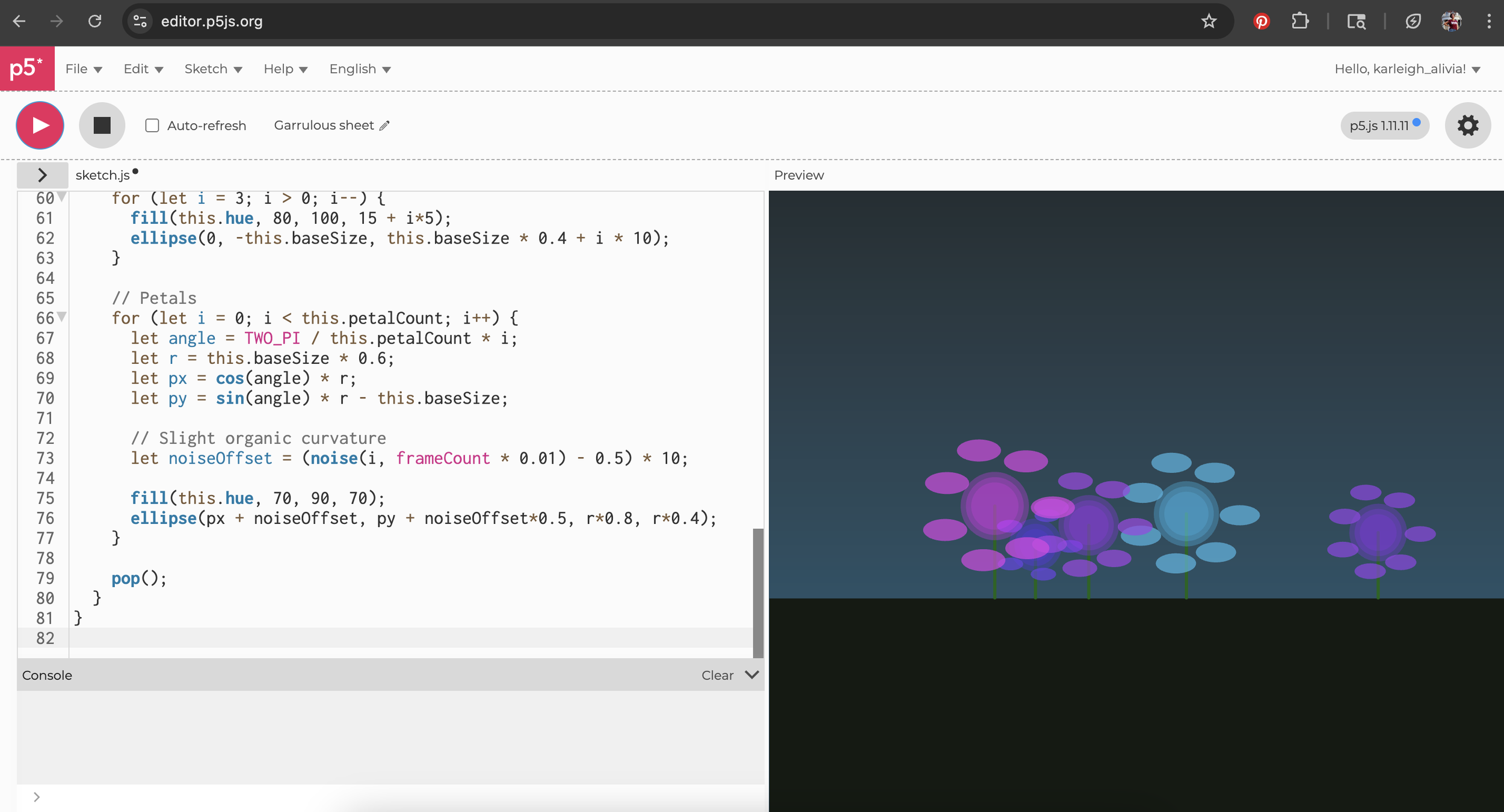

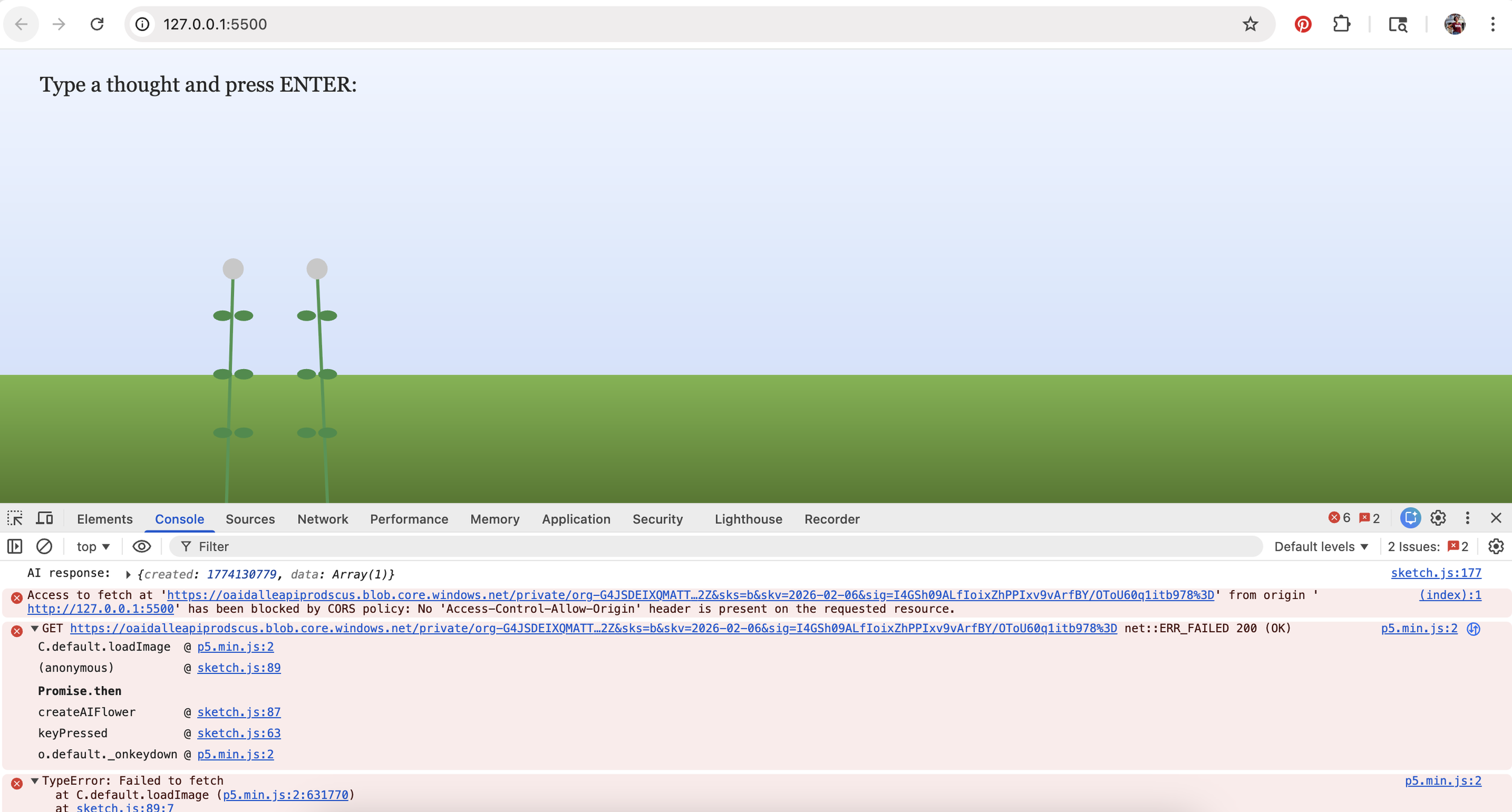

p5.js canvas rendering of all flowers, stems, leaves, butterflies, bees, and pollen

Frame-by-frame flower growth animation

Realistic butterfly rendering with Bézier-curve wings, segmented bodies, and flapping animation

Seasonal weather effects (rain, snow, falling leaves)

Procedural nature soundscape (wind, rustling leaves, birdsong, crickets) via Web Audio API

Body pose detection and gesture recognition via MediaPipe

Coordinate mapping from camera space to canvas space

Node.js proxy server routing API calls
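Coordinate mapping is what places a bloom where the visitor is standing. Below is a minimal sketch. It assumes MediaPipe-style landmarks normalized to the 0 to 1 range of the camera frame. It also assumes the camera faces the visitor, so its image is mirrored: without the flip, a visitor stepping left would see the garden respond on their right. The `margin` crop is a hypothetical parameter that ignores the unreliable edges of the frame. A plain linear mapping is used, and lens distortion and projector keystone, which a real wall projection may need to calibrate for, are ignored.

```typescript
interface Point { x: number; y: number; }

interface MappingConfig {
  canvasWidth: number;
  canvasHeight: number;
  mirror: boolean;  // flip horizontally for a camera that faces the visitor
  margin: number;   // HYPOTHETICAL: fraction of the camera frame ignored at each edge (0..0.5)
}

// Map a normalized camera-space point (0..1) into canvas pixels.
function cameraToCanvas(p: Point, cfg: MappingConfig): Point {
  const span = 1 - 2 * cfg.margin;
  // Re-normalize so the cropped region stretches across the whole canvas,
  // then clamp so points inside the margin pin to the canvas edge.
  let u = Math.min(1, Math.max(0, (p.x - cfg.margin) / span));
  const v = Math.min(1, Math.max(0, (p.y - cfg.margin) / span));
  if (cfg.mirror) u = 1 - u;
  return { x: u * cfg.canvasWidth, y: v * cfg.canvasHeight };
}
```

Mirroring is applied after cropping, so the margin works the same on both sides of the frame.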

Physical interaction: the bodies that activate

Voice input activates automatically when a person enters the camera frame

Raising both arms triggers a bloom at the visitor's position on the wall

Holding a hand near a flower triggers the flower to speak

Sky, wind, and weather shift in response to each new emotion

Butterflies and bees spawn based on emotional tone

Garden grows and changes with every visitor interaction
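The raised-arms trigger reduces to comparing landmark heights. The sketch below assumes MediaPipe Pose's 33-landmark layout, where indices 11 and 12 are the shoulders and 15 and 16 are the wrists. Coordinates are normalized and y grows downward, so "above" means a smaller y. Three details are hypothetical choices, not necessarily how the installation does it: the `clearance` margin, the `visibility` threshold, and the rule that the pose must hold for several consecutive frames. Together they keep a stretch or a quick wave from triggering a bloom.

```typescript
interface Landmark { x: number; y: number; visibility?: number; }

// MediaPipe Pose landmark indices.
const LEFT_SHOULDER = 11, RIGHT_SHOULDER = 12, LEFT_WRIST = 15, RIGHT_WRIST = 16;

function isVisible(l: Landmark | undefined, minVis: number): boolean {
  return l !== undefined && (l.visibility ?? 1) >= minVis;
}

// True when both wrists sit above their shoulders by at least `clearance`
// (normalized units). Remember that y grows downward in image space.
function armsRaised(pose: Landmark[], clearance = 0.05, minVis = 0.5): boolean {
  const ls = pose[LEFT_SHOULDER], rs = pose[RIGHT_SHOULDER];
  const lw = pose[LEFT_WRIST], rw = pose[RIGHT_WRIST];
  if (![ls, rs, lw, rw].every((l) => isVisible(l, minVis))) return false;
  return lw.y < ls.y - clearance && rw.y < rs.y - clearance;
}

// Fires once after the gesture has held for `holdFrames` consecutive frames,
// and re-arms only after the arms come back down.
class RaiseDetector {
  private count = 0;
  private fired = false;
  private readonly holdFrames: number;

  constructor(holdFrames = 10) {
    this.holdFrames = holdFrames;
  }

  // Call once per camera frame; `pose` is null when nobody is detected.
  update(pose: Landmark[] | null): boolean {
    if (!pose || !armsRaised(pose)) {
      this.count = 0;
      this.fired = false;
      return false;
    }
    this.count++;
    if (!this.fired && this.count >= this.holdFrames) {
      this.fired = true;
      return true;
    }
    return false;
  }
}
```

Because the detector re-arms only after the arms drop, one raised-arms pose produces exactly one bloom, however long it is held.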

Interactive Installation

The installation uses three body-based interactions (stepping into view, raising both arms, and holding a hand near a flower), detected through a live camera feed and a body pose recognition model running entirely in the browser. When a visitor enters the frame, the system detects their presence and activates the microphone automatically. There is no button to press. The visitor speaks naturally, and their words are transcribed in real time and held in memory. When they raise both arms above their shoulders, the garden blooms a flower at the position on the wall corresponding to where they are standing. If they hold a hand near an existing flower for a moment, that flower speaks: Claude generates a short poetic response in the voice of the flower, grounded in the specific emotion and thought that created it.
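"Holding a hand near a flower for a moment" can be modeled as a dwell timer: the hand has to stay within some radius of the same flower for a minimum time before that flower speaks. The following is a minimal sketch. Hand positions are assumed to be mapped into canvas pixels already. The `DwellTrigger` class, its radius, its dwell time, and its per-flower cooldown are all hypothetical. The returned flower id is where a request for that flower's voice would be sent.

```typescript
interface Flower { id: string; x: number; y: number; }
interface Hand { x: number; y: number; }

// HYPOTHETICAL dwell detector: a flower "speaks" once the hand has stayed near it
// for dwellMs, then stays quiet for cooldownMs even if the hand lingers.
class DwellTrigger {
  private targetId: string | null = null;
  private since = 0;
  private lastSpoke = new Map<string, number>();
  readonly radius: number;
  readonly dwellMs: number;
  readonly cooldownMs: number;

  constructor(radius = 80, dwellMs = 1200, cooldownMs = 8000) {
    this.radius = radius;
    this.dwellMs = dwellMs;
    this.cooldownMs = cooldownMs;
  }

  // Call once per frame with a timestamp in ms; returns the id of the flower
  // that should speak now, or null.
  update(hand: Hand | null, flowers: Flower[], now: number): string | null {
    const nearest = hand ? this.nearestWithin(hand, flowers) : null;
    if (!nearest) {
      this.targetId = null;
      return null;
    }
    if (nearest.id !== this.targetId) {
      // The hand moved to a different flower: restart the dwell clock.
      this.targetId = nearest.id;
      this.since = now;
      return null;
    }
    const last = this.lastSpoke.get(nearest.id) ?? -Infinity;
    if (now - this.since >= this.dwellMs && now - last >= this.cooldownMs) {
      this.lastSpoke.set(nearest.id, now);
      return nearest.id;
    }
    return null;
  }

  // The closest flower within `radius` pixels of the hand, if any.
  private nearestWithin(hand: Hand, flowers: Flower[]): Flower | null {
    let best: Flower | null = null;
    let bestDist = this.radius;
    for (const f of flowers) {
      const d = Math.hypot(f.x - hand.x, f.y - hand.y);
      if (d <= bestDist) {
        best = f;
        bestDist = d;
      }
    }
    return best;
  }
}
```

The timer is keyed to the nearest flower, so sweeping a hand across a cluster of blooms restarts the count at each one. Only a deliberate pause makes a flower speak.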